The Monty Hall problem

I’m going through “All of statistics”, and one of the exercises is to work out the probabilities for the Monty Hall problem.

The basic idea is that there are 3 doors, one of which contains a prize, the others have nothing (or a goat, in the original). You choose one door, at which point Monty Hall asks if you’re sure you want that one? To make it more interesting, he chooses one of the other doors that don’t have the prize behind them and opens it. Which means that there are now only two possible doors hiding the prize.

Play time

A picture is worth 1000 words etc., so I made a simulator of the game. You can play about with it to get a feeling for how it works, and you can also run it a lot of times to make use of the law of large numbers to work out whether it’s statistically better to change or not. My first introduction to this problem was in the 90s, via this post-communistic Polish TV game show. The prizes were wonderful - e.g. you could win a selection of meat and ham. Which to be fair, was quite a nice haul in those times… But the main prize, as in the original show, was also a car. So for reasons of historical accuracy, you too, yes You, can win the OG Polish car, the pride and glory of a collaboration between Italy and Poland, none other than the Fiat 126! Here is an example of what is in wait for you!:

The simulator

To switch or not to switch?

From the simulator, it’s quite obvious that switching is the superior tactic. Keeping to the same door results in a prize $\frac {1}{3}$ of the time, while switching wins $\frac {2}{3}$ of the time. This is quite a large difference.

The superiority of switching is not obvious. Most people, when first posed this problem, say that switching and staying will be just as likely to win. After all, there are 2 doors available, so the likelihood of choosing right should be $\frac 1 2$. This caused quite the furore (the article at the link is really heart-warming)in 1990, when Marilyn vos Savant answered a question from a reader whether to stay or change. People for all over wrote in to criticize, with initially only 8% of respondents agreeing with what she had written. Her reaction is praiseworthy - she recommended any teachers reading her column to run experiments during classes and to send her the results. Obviously the results overwhelmingly proved her correct.

How does this work?

Why was she correct though? How can it be that changing the system to only two doors doesn’t reduce the odds of getting it right it to $\frac 1 2$? The magic ingredient is realizing that this isn’t a novel system of 2 doors. If someone new was to wander into the studio after the decoy door was opened, then they would be correct to give even odds on the car being behind either of the doors. But crucially, we have extra information.

Probability isn’t an inherent physical property. It’s a measure of uncertainty. When you say there’s a 50% change of a coin toss coming up heads, you most likely don’t mean that there is a universal law governing coins, enforcing a fair division of results. What is generally meant is that since you don’t have any information to suggest that either side is more likely to come up, neither result will surprise you. Alternatively, you’re saying that if you toss the coin 1000 times, you expect more or less 500 heads and more or less 500 tails. Probability is just a way of mathematically compiling your available information in order to specify how much you expect a given outcome. At least that’s the idea.

An intuitive explanation

The important piece of information that is provided by Monty Hall is him opening a door that:

- is not the door you chose initially

- does not contain the prize

Your initial choice splits the doors into two categories:

- your chosen door, with a $\frac 1 3$ probability of containing the prize

- the other two doors, with a join probability of $\frac 2 3$ of containing the prize

This part should not be controversial - most people would agree that the initial odds of choosing the correct door are $frac 1 3$. A very important part of this is that given the prize is in the second group, you’d have a $\frac 1 2$ chance of picking which one of them has the prize. Examples time:

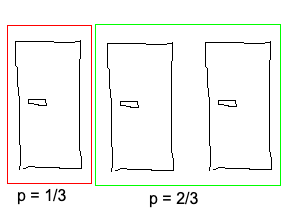

Probabilities for the initial door selection

Lets assume the first door is chosen. That means we can split the doors into the following groups,

with respective probabilities of having the prize of $\frac 1 3$ and $\frac 2 3$:

We can then drill down into the second group, where, assuming that they contain the prize, there

is a $\frac 1 2$ probability that a given door contains the prize:

We can then drill down into the second group, where, assuming that they contain the prize, there

is a $\frac 1 2$ probability that a given door contains the prize:

As a sanity check, if we multiply the probability of the second group containing the prize ($\frac 2 3$)

times the probability of a given door from the second group containing the prize ($\frac 1 2$), we get:

$$p = \frac {2}{3} \cdot \frac {1}{2} = \frac {1}{3}$$

which is what we initially ascribed each door - nice.

As a sanity check, if we multiply the probability of the second group containing the prize ($\frac 2 3$)

times the probability of a given door from the second group containing the prize ($\frac 1 2$), we get:

$$p = \frac {2}{3} \cdot \frac {1}{2} = \frac {1}{3}$$

which is what we initially ascribed each door - nice.

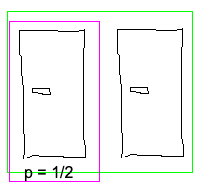

Probabilities after a door is opened

The next step is for Monty Hall to open an unselected door. Once that is done (lets assume it was the second door),

our scene looks like this:

from which it’s obvious that door one has a $\frac 1 3$ probability of containing the prize, while door 3 (in this

example) has a $\frac 2 3$ probability. The main reason this works is that we’re making use of the extra information

that Monty Hall gave us, i.e. that the whole probability mass of the second group is focused on door 3. Again - this

isn’t an inherent property of the system. It’s just us updating our beliefs on the basis of the available information.

If Monty Hall first opened one of the doors, then shuffled the two remaining ones around randomly, we would have lost

the extra information and would then be back to a new, 2 door system, where we should assign 50% chance of either

door containing the prize.

from which it’s obvious that door one has a $\frac 1 3$ probability of containing the prize, while door 3 (in this

example) has a $\frac 2 3$ probability. The main reason this works is that we’re making use of the extra information

that Monty Hall gave us, i.e. that the whole probability mass of the second group is focused on door 3. Again - this

isn’t an inherent property of the system. It’s just us updating our beliefs on the basis of the available information.

If Monty Hall first opened one of the doors, then shuffled the two remaining ones around randomly, we would have lost

the extra information and would then be back to a new, 2 door system, where we should assign 50% chance of either

door containing the prize.

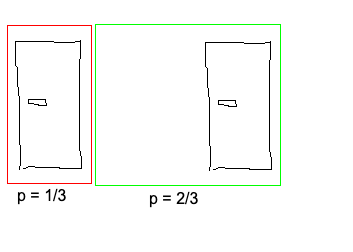

Many worlds interpretation

Another way of approaching this is to imagine 3 parallel worlds, each of which has the prize behind a different door:

Each world is equally likely, so we assign each world a probability of $\frac 1 3$. Lets assume we always pick door 1.

After Monty Hall opens a door, our 3 worlds look like (there are 2 possible ways for the world with door 1):

Each world is equally likely, so we assign each world a probability of $\frac 1 3$. Lets assume we always pick door 1.

After Monty Hall opens a door, our 3 worlds look like (there are 2 possible ways for the world with door 1):

If we are in worlds 2 or 3, changing choices to the other unopened door is a guaranteed win. If we’re in world 1, changing

is a guaranteed loss. Seeing as each world is equally likely, we have two possible worlds in which we win by changing, and

one in which we lose by changing. Which is another way of saying that changing choices has a $\frac 2 3$ probability of winning.

If we are in worlds 2 or 3, changing choices to the other unopened door is a guaranteed win. If we’re in world 1, changing

is a guaranteed loss. Seeing as each world is equally likely, we have two possible worlds in which we win by changing, and

one in which we lose by changing. Which is another way of saying that changing choices has a $\frac 2 3$ probability of winning.

Bayesian probability

Gaining an intuitive understanding is very important, but so is mathematical rigour, to check whether you’re correct. This part was a lot harder for me than the basic intuition. Mainly because I was approaching it wrong…

We’re interested in the probability of a prize being behind a door when switching. For simplicity, we’ll assume that the initial choice is door 1. The system is symmetrical, in the sense that any result that holds for door 1 will also hold for the other doors. Ditto for which door is opened. So to make things easier, lets assume that door 2 is opened. We’ll start with working out the probability for door 1 to contain the prize: $$P(D_{1p}|D_{2o}) = \frac{P(D_{2o}|D_{1p})P(D_{1p})}{P(D_{2o})}$$ Where $D_{1p}$ is that door 1 contains a prize, $D_{2o}$ is door 2 has been opened.

Divide and conquer

- $P(D_{1p})$ is simple to calculate. It’s just the prior probability of door 1 having the prize, i.e. $\frac 1 3$.

- $P(D_{2o}|D_{1p})$ is the probability of door 2 being opened if door 1 has the prize. In this case, either door 2 or door 3 will be picked at random. So the probability is $\frac 1 2$ (seeing as there are 2 doors, one of which is picked).

- $P(D_{2o}|D_{3p})$ is the probability of door 2 being opened if door 3 has the prize. This is 1, seeing as there is no other possible outcome.

$P(D_{2o})$ is the fun one. We can split it into 3 possibilities, one for each door i.e. $$P(D_{2o}) = P(D_{2o}D_{1p}) + P(D_{2o}D_{2p}) + P(D_{2o}D_{3p})$$ This is saying that $P(D_{2o})$ is equal to the probability of one of the following being true:

- Door 1 has the prize and door 2 is opened $\to P(D_{2o}D_{1p}) = P(D_{2o}|D_{1p}) P(D_{1p})$

- Door 2 has the prize and door 2 is opened $\to P(D_{2o}D_{2p}) = P(D_{2o}|D_{2p}) P(D_{2p})$

- Door 3 has the prize and door 2 is opened $\to P(D_{2o}D_{3p}) = P(D_{2o}|D_{3p}) P(D_{3p})$

Understanding conditional probabilities vs joint probabilities

This part was the hardest for me to work out. Initially I assumed that

$$P(D_{2o}) = P(D_{2o}|D_{1p}) + P(D_{2o}|D_{2p}) + P(D_{2o}|D_{3p})$$

Obviously the probability of door 2 being opened depends on which world we’re in, and seeing as they’re mutually exclusive,

we can just add the probabilities of the components to get the overall probability of door 2 being opened. My error was in

stopping at that point, and not taking into account the probability of a given door having the prize. $P(D_{2o}|D_{ip})$ is

all very well, but we mustn’t forget to take into account $P(D_{ip})$. As an example, the probability of being hit by a bullet

can be split into <hit while someone is shooting at you> and <hit while sitting at home> (I assume that those are 2,

exclusive options, and that there are no other ways to be shot - bear with me here). Lets assume that

<hit while someone is shooting at you> is 90%, and <hit while sitting at home> is 1%. Does that mean that the probability

of being hit by a bullet is 91%? Of course not! We have to first scale it by how likely either scenario is - let’s assume

you’re getting into gun fights 1% of the time, and sit at home 80% of the time. With these numbers, the probability of you

getting hit by a bullet is

$$P(b) = \frac {90} {100} \cdot \frac 1 {100} + \frac 1 {100} \cdot \frac {80} {100} = \frac {17} {1000} = 1.7\% $$

Another way of phrasing this is saying that the probability of A is equal to the sum of its sub joint probabilities. At least in the case where they’re exclusive options. i.e. $P(A) = \sum_i^n{P(AB_{i})}$, where $B_i$ are sub cases.

Calculate the components

We’ve already worked out $P(D_{2o}|D_{1p})$. The probability of door 2 being opened if it holds the prize is 0, since only doors that don’t have the prize are opened. $P(D_{2o}|D_{3p}) = 1$, since if one of door 2 and door 3 has to be opened, but door 3 contains the prize, then only door 2 can be opened (as doors with prizes cannot be opened). This gives us: $$P(D_{2o}) = P(D_{2o}|D_{1p})P(D_{1p}) + P(D_{2o}|D_{2p})P(D_{2p}) + P(D_{2o}|D_{3p})P(D_{3p})$$ $$P(D_{2o}) = \frac 1 2 \cdot \frac 1 3 + 0 \cdot \frac 1 3 + 1 \cdot \frac 1 3 = \frac 1 6 + \frac 1 3 = \frac 1 6 + \frac 2 6 = \frac 3 6 = \frac 1 2 $$ Now we have all the components, we can go back to calculating the actual probabilities.

To switch or not to switch, v2

Let’s plug in the numbers to formally calculate whether it’s worth switching: $$P(D_{1p}|D_{2o}) = \frac{P(D_{2o}|D_{1p})P(D_{1p})}{P(D_{2o})} = \frac{\frac 1 2 \frac 1 3}{\frac 1 2} = \frac 1 3$$ $$P(D_{2p}|D_{2o}) = \frac{P(D_{2o}|D_{2p})P(D_{2p})}{P(D_{2o})} = \frac{1 \frac 1 3}{\frac 1 2} = \frac 2 3$$ No surprise there, but it’s always nice to get the correct result.

Extending the results

How about a setup with 10 doors, but the same rules? Is it still worth switching? Let’s again assume that door 1 is picked, but there are much more possible variations in this game. We’ll check 2:

- only one door is opened

- all but one of the unchosen doors will be opened

Only one door opened

We’ll again assume that door 2 is opened - it doesn’t change the results, but makes things clearer. $$P(D_{1p}|D_{2o}) = \frac{P(D_{2o}|D_{1p})P(D_{1p})}{P(D_{2o})}$$

- $P(D_{ip}) = \frac 1 {10}$, since there are 10 doors, each equally likely

- $P(D_{2o}|D_{1p}) = \frac 1 {9}$, since any of the unchosen doors will be picked

- $P(D_{2o}|D_{2p}) = 0$, since it was opened it can’t have had the prize

- $P(D_{2o}|D_{ip}) = \frac 1 8$, for $i > 2$, since after removing door 1 (as it was chosen) and door $i$ (as it has the prize) one of the remaining 8 doors will be chosen $$P(D_{2o}) = \sum_i^{10} {P(D_{2o}|D_{ip})P(D_{ip})} = P(D_{2o}|D_{ip})P(D_{1p}) + P(D_{2o}|D_{ip})P(D_{2p}) + 8 \cdot P(D_{2o}|D_{ip})P(D_{ip}) $$ $$P(D_{2o}) = \frac 1 9 \cdot \frac 1 {10} + 0 \cdot \frac 1 {10} + 8 \cdot \frac 1 8 \frac 1 {10} = \frac 1 {90} + \frac {9} {90} = \frac {10} {90} = \frac 1 {9} $$ And for the actual probabilities:

- $P(D_{1p}|D_{2o}) = \frac{\frac 1 9 \cdot \frac 1 {10}}{\frac 1 9} = \frac 1 {10}$

- $P(D_{ip}|D_{2o}) = \frac{\frac 1 8 \cdot \frac 1 {10}}{\frac 1 9} = \frac 9 {80} \approx \frac 1 9$

In this game it’s also worth switching, but the gains are a lot less obvious. Which is quite intuitive, as the opened door’s probability mass gets divided between all 8 unselected doors.

All but one unchosen door opened

How about the case where all other doors are opened, leaving us with a choice between 2 doors? My intuition says to expect switching to win $\frac 9 {10}$ of the time. To make things easier, we’ll check the probabilities given door 2 isn’t opened. The numbers should work out the same, it’s just easier to manage. $$P(D_{1p}|D_{2c}) = \frac{P(D_{2c}|D_{1p})P(D_{1p})}{P(D_{2c})}$$

- $P(D_{ip}) = \frac 1 {10}$, since there are 10 doors, each equally likely

- $P(D_{2c}|D_{1p}) = \frac 1 {9}$, since any of the unchosen doors will be picked to stay closed

- $P(D_{2c}|D_{2p}) = 1$, since if it has the prize it won’t be opened

- $P(D_{2c}|D_{ip}) = 0$, for $i > 2$, since if a different door contains the prize, door 2 won’t be opened $$P(D_{2o}) = \sum_i^{10} {P(D_{2c}|D_{ip})P(D_{ip})} = P(D_{2c}|D_{ip})P(D_{1p}) + P(D_{2c}|D_{ip})P(D_{2p}) + 8 \cdot P(D_{2c}|D_{ip})P(D_{ip}) $$ $$P(D_{2o}) = \frac 1 9 \cdot \frac 1 {10} + 1 \cdot \frac 1 {10} + 8 \cdot 0 \cdot \frac 1 {10} = \frac 1 {90} + \frac {9} {90} = \frac {10} {90} = \frac 1 {9} $$ And for the actual probabilities:

- $P(D_{1p}|D_{2c}) = \frac{\frac 1 9 \cdot \frac 1 {10}}{\frac 1 9} = \frac 1 {10}$

- $P(D_{2p}|D_{2c}) = \frac{1 \cdot \frac 1 {10}}{\frac 1 9} = \frac 9 {10}$

This is what was expected, which is good.